Hey everyone

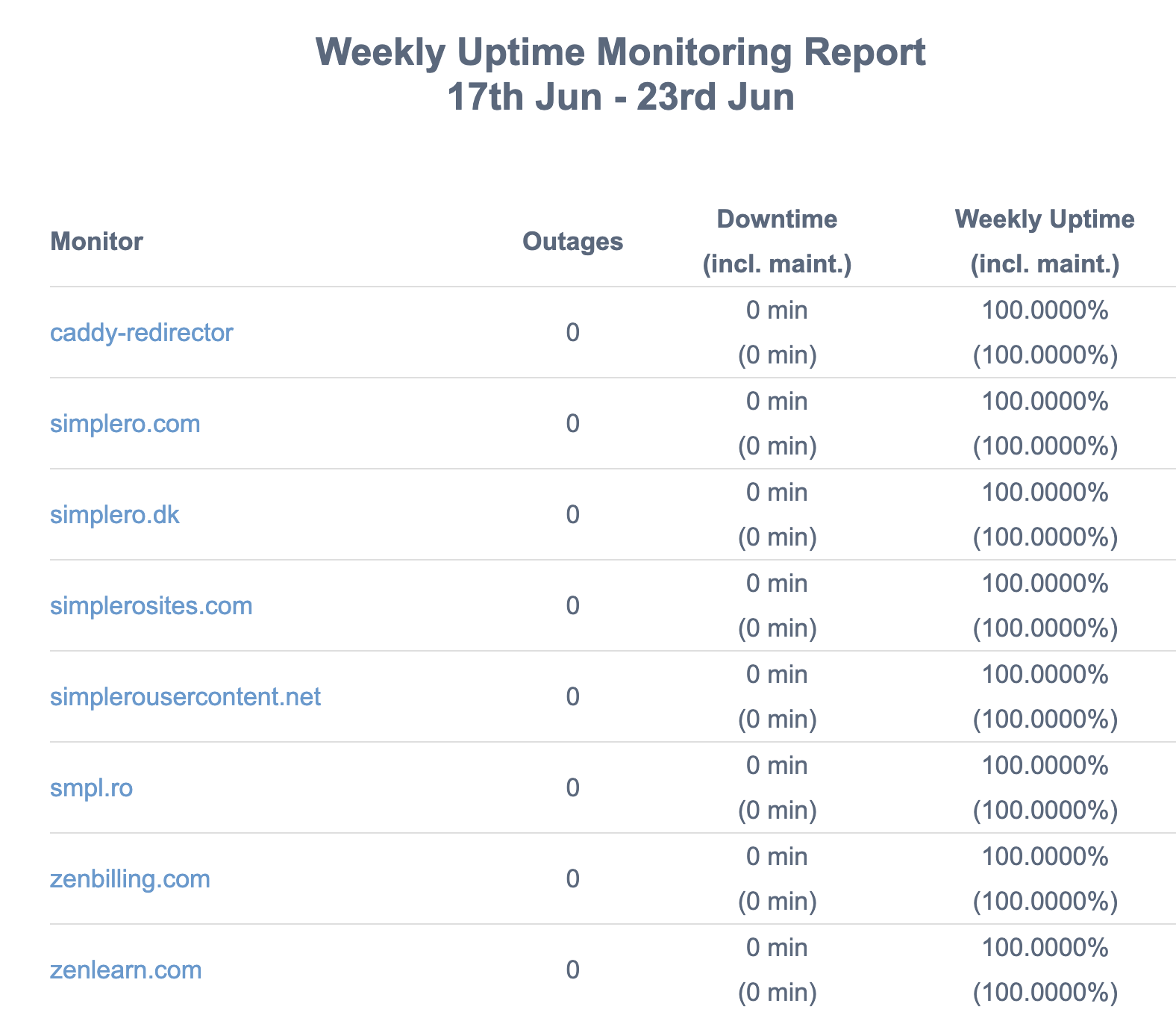

As you may have noticed, we had some downtime last week, and that sucks.

We had one instance where our database server became wholly unresponsive, and we had to restart it.

Thankfully, database servers are designed to be extremely resilient in the face of having to be forcibly shut down, so everything came back up clean and fast.

There was no data loss at the database level, but there was obviously some at the application level: anyone trying to opt in, buy, or access your pages, as well as all you admins trying to access Simplero, couldn't get through during this downtime.

After the main event, there were 3-4 smaller blips that lasted only a few minutes each, and recovered quickly on their own.

At first, we were at a loss as to what caused this, but after some intensive research and digging, our crack team of engineers identified the root cause.

It's an old process that has been running every six hours forever, and it does a lot of very complicated analysis to identify potential spam opt-ins.

Once we identified the culprit, it was easy to fix.

The silver lining in all of this is that this event got us to take a harder look at a bunch of other performance issues that we can address.

Two of our engineers showed a strong interest in and aptitude for this kind of work, which I didn't know they had. I've asked them both to spend a day a week on everything performance and infrastructure.

We call it "Fast Fridays." 🏎️

Thank you all for your patience and understanding.

We take uptime very, very seriously, and I'm glad that we were able to not only fix what caused the downtime last week, but also use it as a push to improve our performance more broadly.
